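Using File Storage CFS

Container Engine CCE lets workloads use Baidu AI Cloud File Storage (CFS) by creating a PV/PVC and mounting it as a data volume. This article describes how to mount file storage in a cluster both dynamically and statically.

Limitations

- The CFS instance and its mount target must be in the same VPC as the cluster nodes.

Prerequisites

Procedure

This article uses the CFS mount target address cfs-test.baidubce.com as an example.

Mounting CFS with dynamic PV/PVC

Method 1: Using the kubectl command line

1. Create the StorageClass and Provisioner

dynamic-cfs-template.yaml is a YAML template file that describes the cluster resources to create.

The content of dynamic-cfs-template.yaml is as follows: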

    kind: ClusterRole
    apiVersion: rbac.authorization.k8s.io/v1
    metadata:
      name: nfs-client-provisioner-runner
    rules:
      - apiGroups: [""]
        resources: ["persistentvolumes"]
        verbs: ["get", "list", "watch", "create", "delete"]
      - apiGroups: [""]
        resources: ["persistentvolumeclaims"]
        verbs: ["get", "list", "watch", "update"]
      - apiGroups: ["storage.k8s.io"]
        resources: ["storageclasses"]
        verbs: ["get", "list", "watch"]
      - apiGroups: [""]
        resources: ["events"]
        verbs: ["create", "update", "patch"]
    ---
    kind: ClusterRoleBinding
    apiVersion: rbac.authorization.k8s.io/v1
    metadata:
      name: run-nfs-client-provisioner
    subjects:
      - kind: ServiceAccount
        name: nfs-client-provisioner
        namespace: kube-system
    roleRef:
      kind: ClusterRole
      name: nfs-client-provisioner-runner
      apiGroup: rbac.authorization.k8s.io
    ---
    kind: Role
    apiVersion: rbac.authorization.k8s.io/v1
    metadata:
      name: leader-locking-nfs-client-provisioner
      namespace: kube-system
    rules:
      - apiGroups: [""]
        resources: ["endpoints"]
        verbs: ["get", "list", "watch", "create", "update", "patch"]
    ---
    kind: RoleBinding
    apiVersion: rbac.authorization.k8s.io/v1
    metadata:
      name: leader-locking-nfs-client-provisioner
      namespace: kube-system
    subjects:
      - kind: ServiceAccount
        name: nfs-client-provisioner
        # replace with namespace where provisioner is deployed
        namespace: kube-system
    roleRef:
      kind: Role
      name: leader-locking-nfs-client-provisioner
      apiGroup: rbac.authorization.k8s.io
    ---
    kind: ServiceAccount
    apiVersion: v1
    metadata:
      name: nfs-client-provisioner
      namespace: kube-system
    ---
    kind: PersistentVolume
    apiVersion: v1
    metadata:
      name: pv-cfs
    spec:
      capacity:
        storage: 5Gi
      accessModes:
        - ReadWriteMany
      persistentVolumeReclaimPolicy: Retain
      mountOptions:
        - hard
        - nfsvers=4.1
        - nordirplus
      nfs:
        path: {{NFS_PATH}}
        server: {{NFS_SERVER}}
    ---
    kind: PersistentVolumeClaim
    apiVersion: v1
    metadata:
      name: pvc-cfs
      namespace: kube-system
    spec:
      accessModes:
        - ReadWriteMany
      resources:
        requests:
          storage: 5Gi
    ---
    kind: Deployment
    apiVersion: apps/v1
    metadata:
      name: nfs-client-provisioner
      namespace: kube-system
    spec:
      selector:
        matchLabels:
          app: nfs-client-provisioner
      replicas: 1
      strategy:
        type: Recreate
      template:
        metadata:
          labels:
            app: nfs-client-provisioner
        spec:
          serviceAccountName: nfs-client-provisioner
          containers:
            - name: nfs-client-provisioner
              image: registry.baidubce.com/cce-plugin-pro/nfs-client-provisioner:latest
              imagePullPolicy: Always
              volumeMounts:
                - name: nfs-client-root
                  mountPath: /persistentvolumes
              env:
                - name: PROVISIONER_NAME
                  value: {{PROVISIONER_NAME}}
                - name: NFS_SERVER
                  value: {{NFS_SERVER}}
                - name: NFS_PATH
                  value: {{NFS_PATH}}
          volumes:
            - name: nfs-client-root
              persistentVolumeClaim:
                claimName: pvc-cfs
    ---
    kind: StorageClass
    apiVersion: storage.k8s.io/v1
    metadata:
      name: {{STORAGE_CLASS_NAME}}
    provisioner: {{PROVISIONER_NAME}}
    parameters:
      archiveOnDelete: "{{ARCHIVE_ON_DELETE}}"
      sharePath: "{{SHARE_PATH}}"
    mountOptions:
      - hard
      - nfsvers=4.1
      - nordirplus

The customizable options in the dynamic-cfs-template.yaml template file are described below:

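| Option | Description |
|---|---|
| NFS_SERVER | CFS mount target address. |
| NFS_PATH | Remote CFS directory to mount. The directory must already exist before use; if it does not, the Provisioner fails to start. |
| SHARE_PATH | Whether PVCs share the CFS mount directory: true = shared (not isolated), false = isolated. When isolated, a subdirectory is created under the CFS mount directory for each PVC, and the PVC uses that subdirectory as its mount directory; otherwise all PVCs share the mount directory. |
| ARCHIVE_ON_DELETE | Whether to keep the data after a PVC is deleted: true = keep, false = do not keep. Takes effect only when PVC mount directories are isolated; when the mount directory is shared, deleting a PVC deletes no data. If set to false, the PVC's subdirectory is deleted outright; if set to true, the subdirectory is kept and renamed with an archive- prefix. |
| STORAGE_CLASS_NAME | Name of the StorageClass to create. |
| PROVISIONER_NAME | Name of the Provisioner. |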
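To make the interaction between SHARE_PATH and ARCHIVE_ON_DELETE concrete, the shell sketch below mimics the behavior described in the table on a local temporary directory. It is an illustration only, not the Provisioner's code: the helper names `provision_dir` and `release_dir` and the subdirectory name `default-dynamic-pvc-cfs` are hypothetical.

```shell
#!/bin/sh
# Illustration only: mimics, on a local directory, the directory handling
# described in the table. This is NOT the Provisioner's implementation;
# the function names and the subdirectory name are hypothetical.

# provision_dir ROOT SHARE_PATH SUBDIR: print the directory a PVC would use
provision_dir() {
    root=$1; share_path=$2; subdir=$3
    if [ "$share_path" = "true" ]; then
        echo "$root"                     # shared: every PVC uses the mount directory itself
    else
        mkdir -p "$root/$subdir"         # isolated: one subdirectory per PVC
        echo "$root/$subdir"
    fi
}

# release_dir ROOT SHARE_PATH ARCHIVE_ON_DELETE SUBDIR: handle PVC deletion
release_dir() {
    root=$1; share_path=$2; archive=$3; subdir=$4
    if [ "$share_path" = "true" ]; then
        return 0                         # shared: deleting a PVC deletes no data
    elif [ "$archive" = "true" ]; then
        mv "$root/$subdir" "$root/archive-$subdir"   # keep data under an archive- prefix
    else
        rm -rf "${root:?}/${subdir:?}"   # remove the PVC's subdirectory
    fi
}

# Demo: isolated directories (SHARE_PATH=false) with ARCHIVE_ON_DELETE=true
ROOT=$(mktemp -d)
dir=$(provision_dir "$ROOT" false default-dynamic-pvc-cfs)
echo "data" > "$dir/file.txt"
release_dir "$ROOT" false true default-dynamic-pvc-cfs
ls "$ROOT"    # prints: archive-default-dynamic-pvc-cfs
```

Rerunning the demo with `false false` removes the subdirectory instead, and with SHARE_PATH set to `true` the delete step leaves the directory untouched.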

On systems with a shell, you can use the following replace.sh script to replace the template variables in the YAML template.

    #!/bin/sh
    # user defined vars

    NFS_SERVER="cfs-test.baidubce.com"
    NFS_PATH="/cce/shared"
    SHARE_PATH="true" # whether PVCs share the mount directory: true = shared, false = isolated
    ARCHIVE_ON_DELETE="false" # whether to keep data after a PVC is deleted; effective only when directories are isolated: true = keep, false = do not keep
    STORAGE_CLASS_NAME="sharedcfs" # StorageClass name
    PROVISIONER_NAME="baidubce/cfs-provisioner" # Provisioner name

    YAML_FILE="./dynamic-cfs-template.yaml"

    # replace template vars in yaml file

    sed -i "s#{{SHARE_PATH}}#$SHARE_PATH#" "$YAML_FILE"
    sed -i "s#{{ARCHIVE_ON_DELETE}}#$ARCHIVE_ON_DELETE#" "$YAML_FILE"
    sed -i "s#{{STORAGE_CLASS_NAME}}#$STORAGE_CLASS_NAME#" "$YAML_FILE"
    sed -i "s#{{PROVISIONER_NAME}}#$PROVISIONER_NAME#" "$YAML_FILE"
    sed -i "s#{{NFS_SERVER}}#$NFS_SERVER#" "$YAML_FILE"
    sed -i "s#{{NFS_PATH}}#$NFS_PATH#" "$YAML_FILE"

- Set the shell variables in the first half of the script to the desired values, place replace.sh and dynamic-cfs-template.yaml in the same directory, and run sh replace.sh.

- Alternatively, replace the template variables in the YAML template by any other means.

- Finally, run kubectl create -f dynamic-cfs-template.yaml to create the StorageClass and Provisioner.
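Because `sed -i` rewrites dynamic-cfs-template.yaml in place, the template cannot be reused with different values once replace.sh has run. One possible variant, sketched below, renders into a separate file and reports any placeholder that was left unsubstituted. The `render` helper and the output file name are assumptions made for illustration, and the demo uses a two-line stand-in template rather than the full file.

```shell
#!/bin/sh
# Sketch: render a template into a new file instead of editing it in place,
# then fail if any {{PLACEHOLDER}} is left. `render` is a hypothetical helper.
# Values must not contain '#' or '&', which are special in the sed expressions.

render() {
    template=$1; output=$2
    sed -e "s#{{NFS_SERVER}}#$NFS_SERVER#g" \
        -e "s#{{NFS_PATH}}#$NFS_PATH#g" \
        -e "s#{{SHARE_PATH}}#$SHARE_PATH#g" \
        -e "s#{{ARCHIVE_ON_DELETE}}#$ARCHIVE_ON_DELETE#g" \
        -e "s#{{STORAGE_CLASS_NAME}}#$STORAGE_CLASS_NAME#g" \
        -e "s#{{PROVISIONER_NAME}}#$PROVISIONER_NAME#g" \
        "$template" > "$output"
    # grep exits 0 when at least one placeholder is still present
    if grep -n '{{[A-Z_]*}}' "$output"; then
        echo "unreplaced placeholders remain in $output" >&2
        return 1
    fi
}

NFS_SERVER="cfs-test.baidubce.com"
NFS_PATH="/cce/shared"
SHARE_PATH="true"
ARCHIVE_ON_DELETE="false"
STORAGE_CLASS_NAME="sharedcfs"
PROVISIONER_NAME="baidubce/cfs-provisioner"

# Demo on a small stand-in template; the original template file stays unchanged
WORK=$(mktemp -d)
printf 'server: {{NFS_SERVER}}\npath: {{NFS_PATH}}\n' > "$WORK/template.yaml"
render "$WORK/template.yaml" "$WORK/rendered.yaml" && cat "$WORK/rendered.yaml"
```

With the real template, the equivalent call would be `render ./dynamic-cfs-template.yaml ./dynamic-cfs.yaml`, followed by `kubectl create -f` on the rendered file.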

    $ kubectl create -f dynamic-cfs-template.yaml
    clusterrole "nfs-client-provisioner-runner" created
    clusterrolebinding "run-nfs-client-provisioner" created
    role "leader-locking-nfs-client-provisioner" created
    rolebinding "leader-locking-nfs-client-provisioner" created
    serviceaccount "nfs-client-provisioner" created
    persistentvolume "pv-cfs" created
    persistentvolumeclaim "pvc-cfs" created
    deployment "nfs-client-provisioner" created
    storageclass "sharedcfs" created
    $ kubectl get pod --namespace kube-system | grep provisioner
    nfs-client-provisioner-c94494f6d-dlxmj   1/1   Running   0   26s

Once the Pod is in the Running state, the resources required for dynamic PV binding have been created successfully.
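In a script, polling the Pod list by hand can be replaced with `kubectl rollout status`, which blocks until the Deployment is ready or the timeout expires. The wrapper below is a minimal sketch; the function name `wait_for_provisioner` and the default timeout are assumptions, while the Deployment name and namespace come from dynamic-cfs-template.yaml.

```shell
#!/bin/sh
# Sketch: block until the Provisioner Deployment has finished rolling out.
# `wait_for_provisioner` is a hypothetical helper; it needs kubectl access
# to the cluster. The 120s default timeout is an arbitrary choice.
wait_for_provisioner() {
    timeout=${1:-120s}
    kubectl rollout status deployment/nfs-client-provisioner \
        --namespace kube-system --timeout="$timeout"
}

# Usage (against a running cluster):
#   wait_for_provisioner 60s
```

The command exits with a non-zero status if the rollout does not complete in time, so it can gate the following steps.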

2. Dynamically create and bind a PV when creating a PVC

- Specify the name of the StorageClass created above in the PVC spec. When the PVC is created, the Provisioner bound to that StorageClass is called automatically to create a matching PV and bind it.

- Run kubectl create -f dynamic-pvc-cfs.yaml to create the PVC.

Assuming the StorageClass is named sharedcfs, the corresponding dynamic-pvc-cfs.yaml file is as follows:

    kind: PersistentVolumeClaim
    apiVersion: v1
    metadata:
      name: dynamic-pvc-cfs
    spec:
      accessModes:
        - ReadWriteMany
      storageClassName: sharedcfs
      resources:
        requests:
          storage: 5Gi

After the PVC is created, the corresponding PV is created automatically and the PVC status changes to Bound, meaning the PVC is bound to the newly created PV.

    $ kubectl create -f dynamic-pvc-cfs.yaml
    persistentvolumeclaim "dynamic-pvc-cfs" created
    $ kubectl get pvc
    NAME              STATUS   VOLUME                                     CAPACITY   ACCESS MODES   STORAGECLASS   AGE
    dynamic-pvc-cfs   Bound    pvc-6dbf3265-bbe0-11e8-bc54-fa163e08135d   5Gi        RWX            sharedcfs      4s
    $ kubectl get pv
    NAME                                       CAPACITY   ACCESS MODES   RECLAIM POLICY   STATUS   CLAIM                     STORAGECLASS   REASON   AGE
    pv-cfs                                     5Gi        RWX            Retain           Bound    kube-system/pvc-cfs                               21m
    pvc-6dbf3265-bbe0-11e8-bc54-fa163e08135d   5Gi        RWX            Delete           Bound    default/dynamic-pvc-cfs   sharedcfs               7s

3. Mount the PVC in a Pod

Specify the PVC name in the Pod spec, then run kubectl create -f dynamic-cfs-pod.yaml to create the resource. The corresponding dynamic-cfs-pod.yaml file is as follows:

    kind: Pod
    apiVersion: v1
    metadata:
      name: test-pvc-pod
      labels:
        app: test-pvc-pod
    spec:
      containers:
        - name: test-pvc-pod
          image: nginx
          volumeMounts:
            - name: cfs-pvc
              mountPath: "/cfs-volume"
      volumes:
        - name: cfs-pvc
          persistentVolumeClaim:
            claimName: dynamic-pvc-cfs

After the Pod is created, you can read and write the /cfs-volume path in the container to access the content on the corresponding CFS storage.
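A quick way to confirm that the volume is mounted and writable is to write a probe file through the mount path and read it back. The helper below is a sketch under that assumption; `check_mount` and the file name probe.txt are hypothetical, and the Pod name and path are the ones from dynamic-cfs-pod.yaml.

```shell
#!/bin/sh
# Sketch: write a probe file through the mount and read it back.
# `check_mount` and probe.txt are hypothetical names; the helper needs
# kubectl access to the cluster and a shell inside the container image.
check_mount() {
    pod=$1; mount_path=$2
    kubectl exec "$pod" -- sh -c \
        "echo cfs-ok > '$mount_path/probe.txt' && cat '$mount_path/probe.txt'"
}

# Usage (against a running cluster):
#   check_mount test-pvc-pod /cfs-volume
```

If the write succeeds, the command echoes the probe content back; a permission or mount problem surfaces as a non-zero exit status from `kubectl exec`.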

4. Dynamically destroy the bound PV when releasing a PVC

When a PVC is deleted, the dynamic PV bound to it is deleted as well. The data in it is kept or deleted according to the user-defined SHARE_PATH and ARCHIVE_ON_DELETE options.

    $ kubectl get pvc
    NAME              STATUS   VOLUME                                     CAPACITY   ACCESS MODES   STORAGECLASS   AGE
    dynamic-pvc-cfs   Bound    pvc-6dbf3265-bbe0-11e8-bc54-fa163e08135d   5Gi        RWX            sharedcfs      9m
    $ kubectl get pv
    NAME                                       CAPACITY   ACCESS MODES   RECLAIM POLICY   STATUS   CLAIM                     STORAGECLASS   REASON   AGE
    pv-cfs                                     5Gi        RWX            Retain           Bound    kube-system/pvc-cfs                               31m
    pvc-6dbf3265-bbe0-11e8-bc54-fa163e08135d   5Gi        RWX            Delete           Bound    default/dynamic-pvc-cfs   sharedcfs               9m
    $ kubectl delete -f dynamic-pvc-cfs.yaml
    persistentvolumeclaim "dynamic-pvc-cfs" deleted
    $ kubectl get pv
    NAME     CAPACITY   ACCESS MODES   RECLAIM POLICY   STATUS   CLAIM                 STORAGECLASS   REASON   AGE
    pv-cfs   5Gi        RWX            Retain           Bound    kube-system/pvc-cfs                           31m
    $ kubectl get pvc
    No resources found.

Method 2: Using the console

- 登陆 CCE 控制台,单击集群名称进入集群详情页。

-

创建Provisioner。

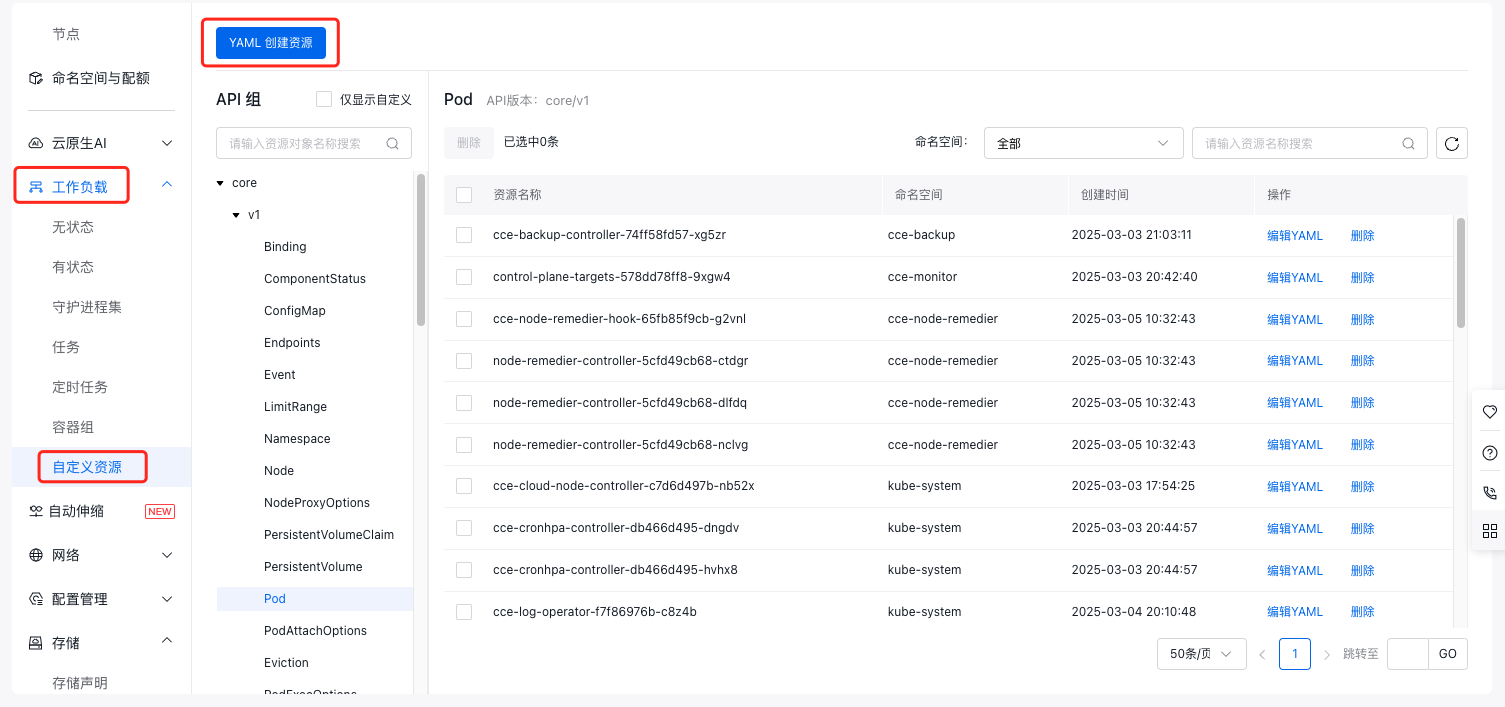

a. 在左侧导航栏中选择工作负载 > 自定义资源,进入自定义资源管理页面。

b. 点击页面上方YAML创建资源按钮,在弹框中分别创建以下资源。

YAML

YAML1kind: ClusterRole 2apiVersion: rbac.authorization.k8s.io/v1 3metadata: 4 name: nfs-client-provisioner-runner 5rules: 6 - apiGroups: [""] 7 resources: ["persistentvolumes"] 8 verbs: ["get", "list", "watch", "create", "delete"] 9 - apiGroups: [""] 10 resources: ["persistentvolumeclaims"] 11 verbs: ["get", "list", "watch", "update"] 12 - apiGroups: ["storage.k8s.io"] 13 resources: ["storageclasses"] 14 verbs: ["get", "list", "watch"] 15 - apiGroups: [""] 16 resources: ["events"] 17 verbs: ["create", "update", "patch"] 18--- 19kind: ClusterRoleBinding 20apiVersion: rbac.authorization.k8s.io/v1 21metadata: 22 name: run-nfs-client-provisioner 23subjects: 24 - kind: ServiceAccount 25 name: nfs-client-provisioner 26 namespace: kube-system 27roleRef: 28 kind: ClusterRole 29 name: nfs-client-provisioner-runner 30 apiGroup: rbac.authorization.k8s.io 31--- 32kind: Role 33apiVersion: rbac.authorization.k8s.io/v1 34metadata: 35 name: leader-locking-nfs-client-provisioner 36 namespace: kube-system 37rules: 38 - apiGroups: [""] 39 resources: ["endpoints"] 40 verbs: ["get", "list", "watch", "create", "update", "patch"] 41--- 42kind: RoleBinding 43apiVersion: rbac.authorization.k8s.io/v1 44metadata: 45 name: leader-locking-nfs-client-provisioner 46 namespace: kube-system 47subjects: 48 - kind: ServiceAccount 49 name: nfs-client-provisioner 50 # replace with namespace where provisioner is deployed 51 namespace: kube-system 52roleRef: 53 kind: Role 54 name: leader-locking-nfs-client-provisioner 55 apiGroup: rbac.authorization.k8s.io 56--- 57apiVersion: v1 58kind: ServiceAccount 59metadata: 60 name: nfs-client-provisioner 61 namespace: kube-system 62--- 63kind: PersistentVolume 64apiVersion: v1 65metadata: 66 name: pv-cfs 67spec: 68 capacity: 69 storage: 5Gi 70 accessModes: 71 - ReadWriteMany 72 persistentVolumeReclaimPolicy: Retain 73 mountOptions: 74 - hard 75 - nfsvers=4.1 76 - nordirplus 77 nfs: 78 path: {{NFS_PATH}} 79 server: {{NFS_SERVER}} 80--- 81kind: 
PersistentVolumeClaim 82apiVersion: v1 83metadata: 84 name: pvc-cfs 85 namespace: kube-system 86spec: 87 accessModes: 88 - ReadWriteMany 89 resources: 90 requests: 91 storage: 5Gi 92--- 93kind: Deployment 94apiVersion: apps/v1 95metadata: 96 name: nfs-client-provisioner 97 namespace: kube-system 98spec: 99 selector: 100 matchLabels: 101 app: nfs-client-provisioner 102 replicas: 1 103 strategy: 104 type: Recreate 105 template: 106 metadata: 107 labels: 108 app: nfs-client-provisioner 109 spec: 110 serviceAccountName: nfs-client-provisioner 111 containers: 112 - name: nfs-client-provisioner 113 image: registry.baidubce.com/cce-plugin-pro/nfs-client-provisioner:latest 114 imagePullPolicy: Always 115 volumeMounts: 116 - name: nfs-client-root 117 mountPath: /persistentvolumes 118 env: 119 - name: PROVISIONER_NAME 120 value: {{PROVISIONER_NAME}} # 指定 Provisioner 名称 121 - name: NFS_SERVER 122 value: {{NFS_SERVER}} # 指定 CFS 挂载点地址 123 - name: NFS_PATH 124 value: {{NFS_PATH}} # 指定 CFS 远程挂载目录,注意该目录在使用前需要预先存在,如果目录不存在会导致 provisioner 插件启动失败 125 volumes: 126 - name: nfs-client-root 127 persistentVolumeClaim: 128 claimName: pvc-cfs -

创建存储类 StorageClass。

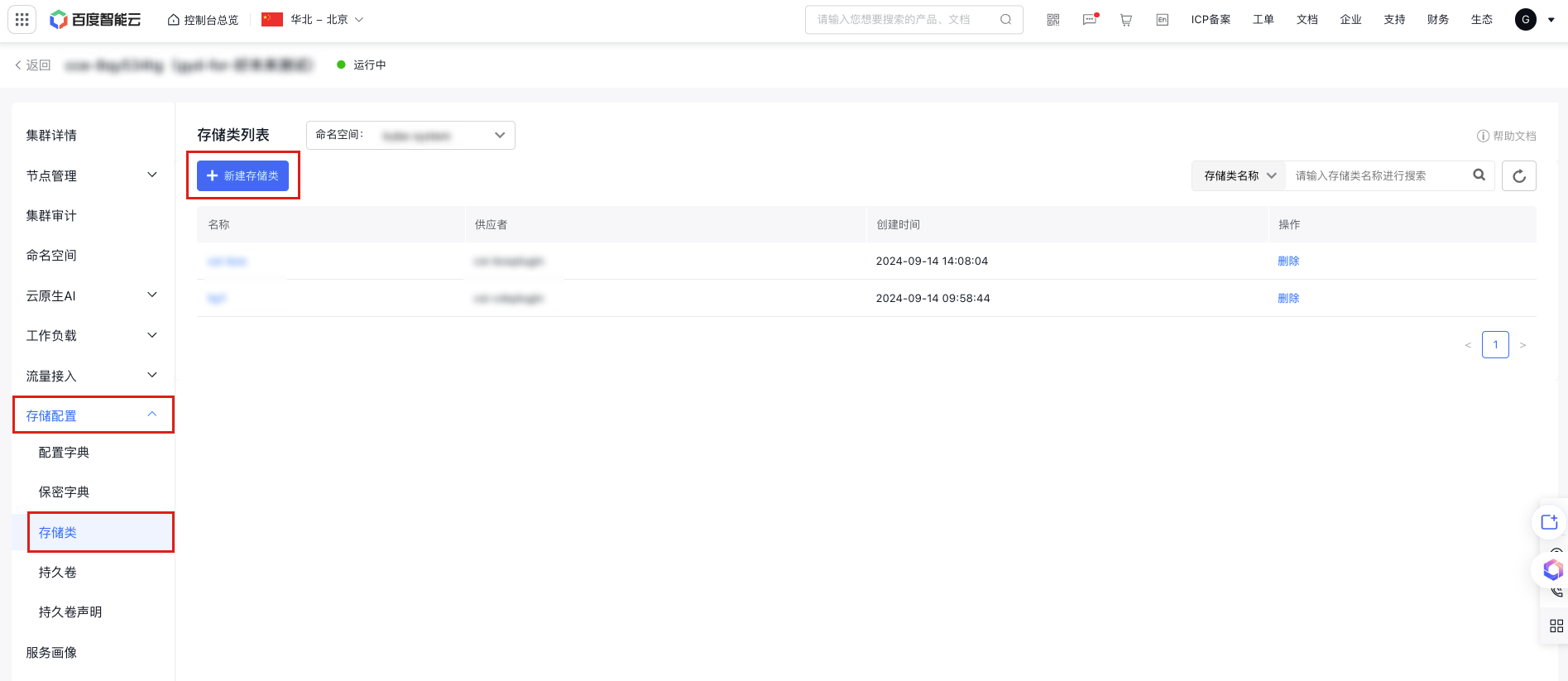

a. 在左侧导航栏中选择存储配置 > 存储类,进入存储类列表页面。

b. 点击存储类列表上方新建存储类按钮,在文件模版中输入以下 yaml 内容。

YAML

YAML1kind: StorageClass 2apiVersion: storage.k8s.io/v1 3metadata: 4 name: {{STORAGE_CLASS_NAME}} # 指定 StorageClass 名称 5provisioner: {{PROVISIONER_NAME}} # 指定创建的 Provisioner 名称 6parameters: 7 archiveOnDelete: "{{ARCHIVE_ON_DELETE}}" # 删除 PVC 后是否保留对应数据,仅当 PVC 挂载目录隔离时生效,true-保留,false-不保留;PVC 挂载目录共享时,删除 PVC 不会删除任何数据。设置为不保留则直接删除对应 PVC 的子目录,否则仅将原子目录名加上 archive- 前缀后保留。 8 sharePath: "{{SHARE_PATH}}" # 不同 PVC 的 CFS 挂载目录是否隔离,true-不隔离,false-隔离。若指定隔离,则会在 CFS 挂载目录下为每个 PVC 创建一个子目录,对应 PVC 使用该子目录作为挂载目录;否则所有 PVC 共享挂载目录。 9mountOptions: 10 - hard 11 - nfsvers=4.1 12 - nordirplusc. 点击确定,将为您创建存储类。

-

创建持久卷声明 PVC 后动态生成 PV 并绑定。

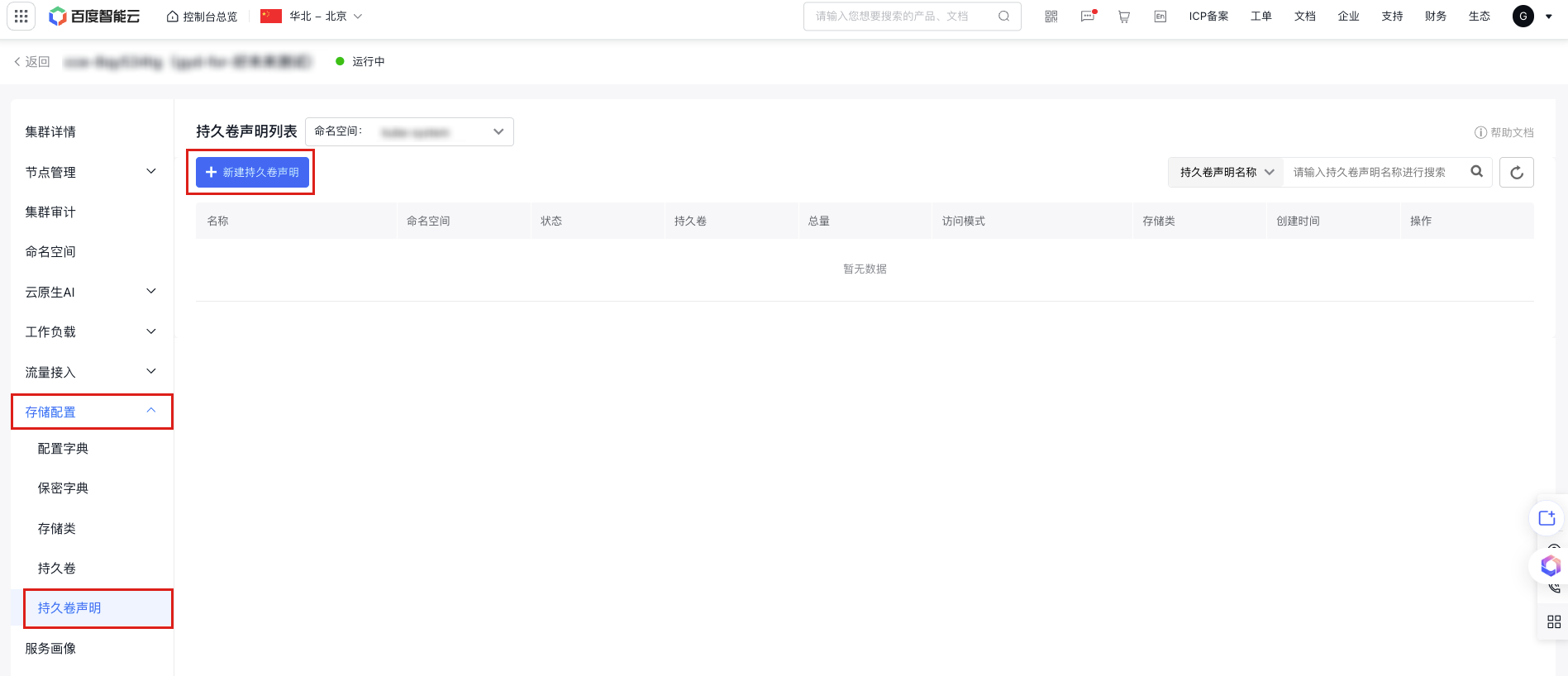

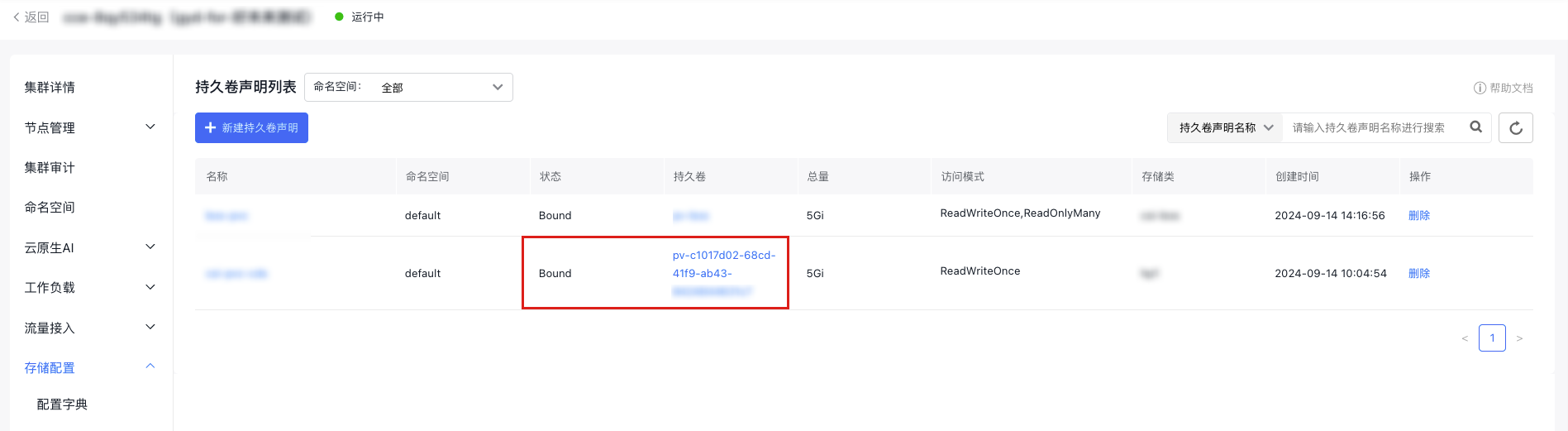

a. 在左侧导航栏选择存储配置 > 持久卷声明,进入持久卷声明列表。

b. 点击持久卷声明列表上方新建持久卷声明按钮,选择表单创建或 Yaml 创建。

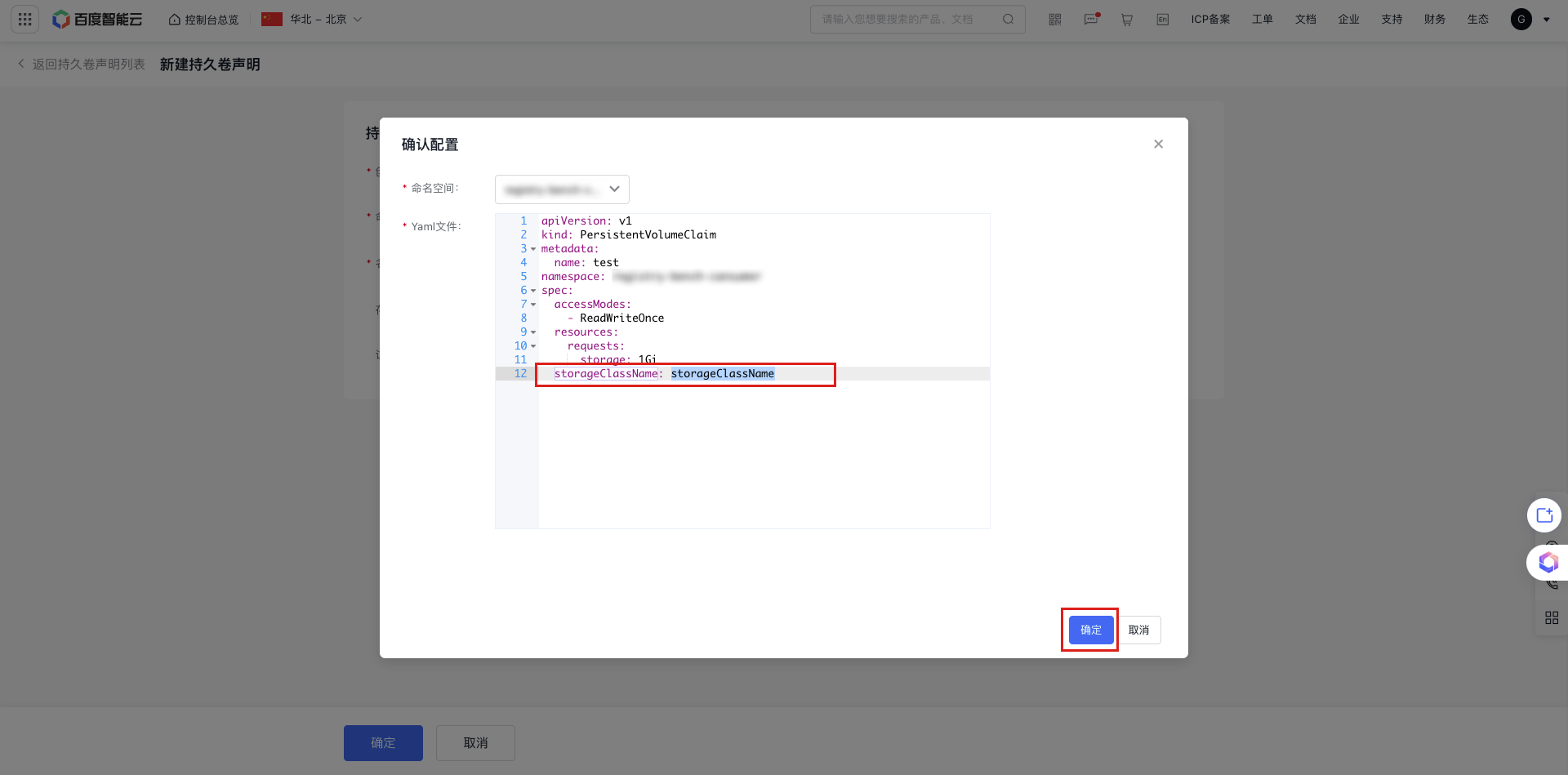

c. 若选择表单创建则需填写相关参数,其中三种访问模式详情参见存储管理概述,点击确定后在确认配置弹窗中输入

storageClassName的值,即存储类的名称。确认配置无误后点击确定。

d. 若选择YAML创建,在文件模版中输入以下 yaml 内容。

YAML1kind: PersistentVolumeClaim 2apiVersion: v1 3metadata: 4 name: pvc-cfs 5 namespace: kube-system 6spec: 7 accessModes: 8 - ReadWriteMany 9 storageClassName: {{STORAGE_CLASS_NAME}} # 指定创建的 StorageClass 名称 10 resources: 11 requests: 12 storage: 5Gie. 创建 PVC 后,在持久卷声明列表 > 持久卷列可以看见相应的 PV 自动创建,PVC 状态列显示为 Bound,即 PVC 已经与新创建的 PV 绑定。

- 创建应用并挂载 PVC,在 Pod spec 内指定相应的 PVC 名称即可。Pod 创建后,可以读写容器内的 /cfs-volume 路径来访问相应的 CFS 存储上的内容。

Mounting CFS with static PV/PVC

Method 1: Using the kubectl command line

1. Create the PV and PVC resources in the cluster

Run kubectl create -f pv-cfs.yaml to create the PV. The corresponding pv-cfs.yaml file is as follows:

    kind: PersistentVolume
    apiVersion: v1
    metadata:
      name: pv-cfs
    spec:
      capacity:
        storage: 8Gi
      accessModes:
        - ReadWriteMany
      persistentVolumeReclaimPolicy: Retain
      mountOptions:
        - hard
        - nfsvers=4.1
        - nordirplus
      nfs:
        path: /
        server: cfs-test.baidubce.com

Note:

- The server field in the YAML is the CFS mount target address.

- The path field in the YAML is the CFS directory to mount. The directory must already exist before mounting.

After creating the PV, run kubectl get pv to see a PV in the Available state, as shown below:

    $ kubectl get pv
    NAME     CAPACITY   ACCESS MODES   RECLAIM POLICY   STATUS      CLAIM   STORAGECLASS   REASON   AGE
    pv-cfs   8Gi        RWX            Retain           Available                                   3s

Next, create a PVC that can bind to this PV.

Run kubectl create -f pvc-cfs.yaml to create the PVC.

The corresponding pvc-cfs.yaml file is as follows:

    kind: PersistentVolumeClaim
    apiVersion: v1
    metadata:
      name: pvc-cfs
    spec:
      accessModes:
        - ReadWriteMany
      resources:
        requests:
          storage: 8Gi

Before binding, the PVC is in the Pending state:

    $ kubectl get pvc
    NAME      STATUS    VOLUME   CAPACITY   ACCESS MODES   STORAGECLASS   AGE
    pvc-cfs   Pending                                                     2s

After binding, the status of both the PV and the PVC changes to Bound:

    $ kubectl get pv
    NAME     CAPACITY   ACCESS MODES   RECLAIM POLICY   STATUS   CLAIM             STORAGECLASS   REASON   AGE
    pv-cfs   8Gi        RWX            Retain           Bound    default/pvc-cfs                           36s
    $ kubectl get pvc
    NAME      STATUS   VOLUME   CAPACITY   ACCESS MODES   STORAGECLASS   AGE
    pvc-cfs   Bound    pv-cfs   8Gi        RWX                           1m

For more settings and field descriptions for PVs and PVCs, see the official Kubernetes documentation.
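When the binding needs to be checked from a script rather than read from the table output, the two sides of the binding can be queried directly with a JSONPath expression. The helpers below are a sketch; the names `bound_volume` and `bound_claim` are hypothetical, while `.spec.volumeName` and `.spec.claimRef` are standard PVC and PV fields.

```shell
#!/bin/sh
# Sketch: query both sides of a PV/PVC binding without parsing table output.
# `bound_volume` and `bound_claim` are hypothetical helper names; both need
# kubectl access to the cluster.

# Print the name of the PV that a PVC is bound to (empty while Pending).
bound_volume() {
    kubectl get pvc "$1" -o jsonpath='{.spec.volumeName}'
}

# Print the namespace/name of the PVC that a PV is bound to.
bound_claim() {
    kubectl get pv "$1" -o jsonpath='{.spec.claimRef.namespace}/{.spec.claimRef.name}'
}

# Usage (against a running cluster):
#   bound_volume pvc-cfs
#   bound_claim pv-cfs
```

For the resources in this example, the two calls would be expected to report pv-cfs and default/pvc-cfs, matching the VOLUME and CLAIM columns above.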

2. Mount the PVC in a Pod

Specify the PVC name in the Pod spec, then run kubectl create -f demo-cfs-pod.yaml to create the Pod.

The corresponding demo-cfs-pod.yaml file is as follows:

    kind: Pod
    apiVersion: v1
    metadata:
      name: demo-cfs-pod
      labels:
        app: demo-cfs-pod
    spec:
      containers:
        - name: nginx
          image: nginx
          volumeMounts:
            - name: cfs-pvc
              mountPath: "/cfs-volume"
      volumes:
        - name: cfs-pvc
          persistentVolumeClaim:
            claimName: pvc-cfs

After the Pod is created, you can read and write the /cfs-volume path in the container to access the content on the corresponding CFS storage.

Because the PV and PVC were created with accessModes set to ReadWriteMany, the PVC can be mounted for reading and writing by Pods on multiple nodes.

3. Release the PV and PVC resources

When the storage is no longer needed, you can release the PVC and PV resources. Before releasing them, first delete all Pods that mount the PVC.
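To find the Pods that still mount a given PVC, the claim names referenced by each Pod can be listed with a JSONPath expression and filtered. The helper below is a minimal sketch; `pods_using_pvc` is a hypothetical name, and it assumes the Pods live in the namespace passed as the second argument (default if omitted).

```shell
#!/bin/sh
# Sketch: list the Pods in a namespace whose volumes reference a given PVC.
# `pods_using_pvc` is a hypothetical helper; it needs kubectl access.
# Usage: pods_using_pvc CLAIM [NAMESPACE]
pods_using_pvc() {
    claim=$1; ns=${2:-default}
    # One line per Pod: "<pod name><TAB><claim names separated by spaces>"
    kubectl get pods -n "$ns" \
        -o jsonpath='{range .items[*]}{.metadata.name}{"\t"}{.spec.volumes[*].persistentVolumeClaim.claimName}{"\n"}{end}' |
    awk -F '\t' -v claim="$claim" '{
        n = split($2, claims, " ")
        for (i = 1; i <= n; i++) {
            if (claims[i] == claim) { print $1; break }
        }
    }'
}

# Usage (against a running cluster):
#   pods_using_pvc pvc-cfs
```

An empty result suggests that no Pod in that namespace references the claim any more, so the PVC can be released.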

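Use the following command to release the PVC:

    $ kubectl delete -f pvc-cfs.yaml

After the PVC is released, the status of the PV that was bound to it changes to Released, as shown below:

    NAME     CAPACITY   ACCESS MODES   RECLAIM POLICY   STATUS     CLAIM             STORAGECLASS   REASON   AGE
    pv-cfs   8Gi        RWX            Retain           Released   default/pvc-cfs                           16m

Run the following command to release the PV resource:

    $ kubectl delete -f pv-cfs.yaml

Method 2: Using the console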

- 登陆 CCE 控制台,单击集群名称进入集群详情页。

-

创建持久卷 PV。

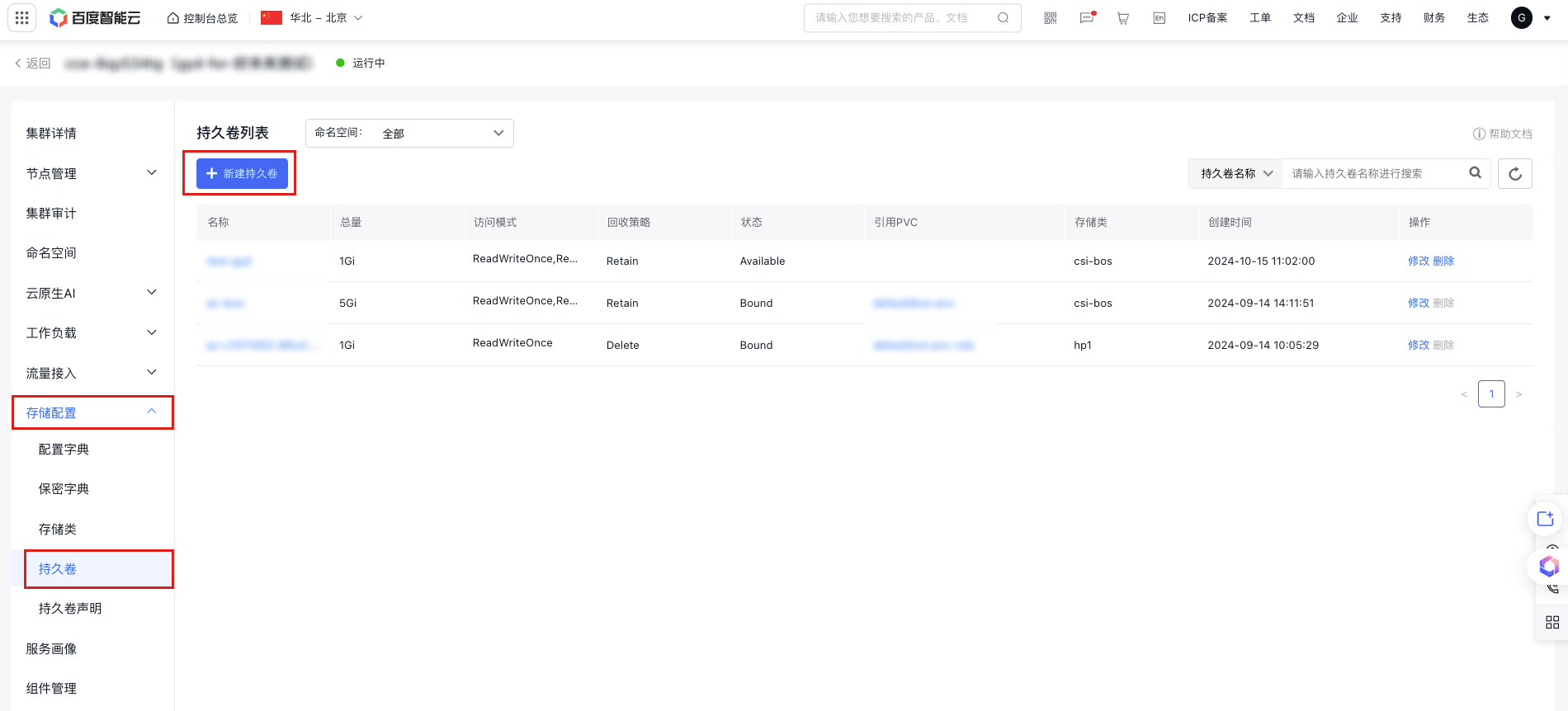

a. 在左侧导航栏选择存储配置 > 持久卷,进入持久卷列表。

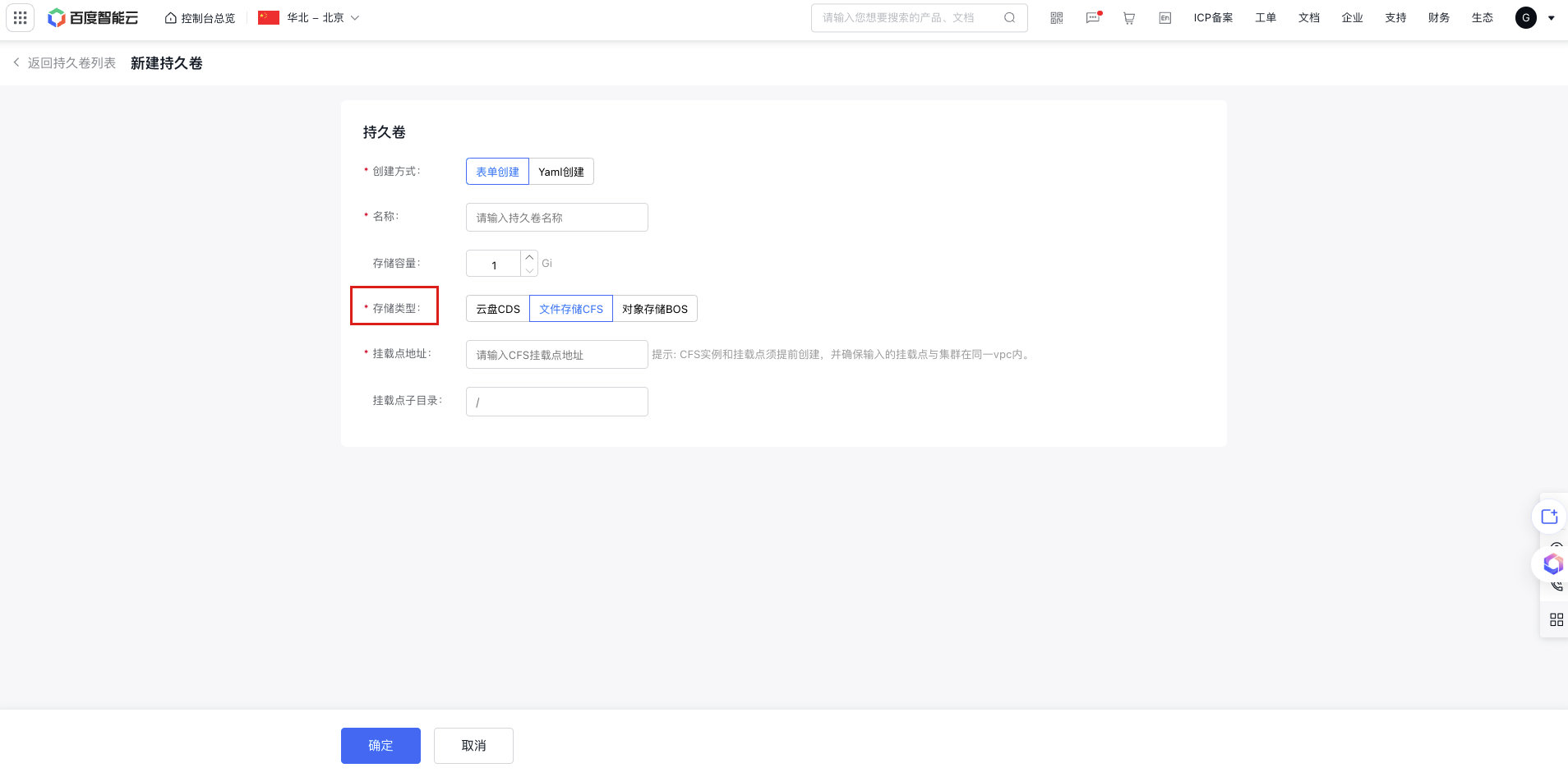

b. 点击持久卷列表上方新建持久卷按钮,选择表单创建或 Yaml 创建。

c. 若选择表单创建则需填写相关参数,根据需求设置存储容量,存储类型选择文件存储 CFS。挂载点地址可参见获取挂载地址。点击确定在二次弹窗中确认配置后点击确定创建 PV。

-

创建可以和持久卷 PV 绑定的持久卷声明 PVC。

a. 在左侧导航栏选择存储配置 > 持久卷声明,进入持久卷声明列表。

b. 点击持久卷列表上方新建持久卷声明按钮,选择表单创建或 Yaml 创建。

c. 根据之前创建的 PV 配置 PVC 的存储容量、访问模式、存储类(可选),点击确定创建 PVC。会查找现有的 PV 资源,寻找与 PVC 请求相匹配的 PV。

d. 绑定后,可以分别在持久卷列表和持久卷声明列表中看见 PV 和 PVC 的状态列变为 Bound。

- 创建应用并挂载 PVC,在 Pod spec 内指定相应的 PVC 名称即可。
